Log with Elasticsearch

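Elasticsearch is a popular JSON-based datastore for storing and indexing large volumes of data. It is commonly used to store logs from a variety of sources and is paired with tools such as Logstash and Kibana to form a complete observability stack known as the Elastic (ELK) Stack.

APISIX can forward logs directly to Elasticsearch through the elasticsearch-logger plugin. The logs can then be searched, filtered, and visualized in Kibana to gather insights for managing your applications.

This guide shows you how to enable the elasticsearch-logger plugin to integrate APISIX with the ELK stack for observability.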

Prerequisites

Start Elasticsearch and Kibana

APISIX currently supports Elasticsearch 7.x and below. This guide uses version 7.17.1 for both Elasticsearch and Kibana.

- Docker

- Kubernetes

Start an Elasticsearch instance in Docker:

docker run -d \
  --name elasticsearch \
  --network apisix-quickstart-net \
  -v elasticsearch_vol:/usr/share/elasticsearch/data/ \
  -p 9200:9200 \
  -p 9300:9300 \
  -e ES_JAVA_OPTS="-Xms512m -Xmx512m" \
  -e discovery.type=single-node \
  -e xpack.security.enabled=false \
  docker.elastic.co/elasticsearch/elasticsearch:7.17.1

Start a Kibana instance in Docker to visualize the indexed data in Elasticsearch:

docker run -d \
  --name kibana \
  --network apisix-quickstart-net \
  -p 5601:5601 \
  -e ELASTICSEARCH_HOSTS="http://elasticsearch:9200" \
  docker.elastic.co/kibana/kibana:7.17.1
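Both containers can take some time before they accept requests. The helper below is a minimal sketch for polling them, assuming curl is installed and the ports are published on 127.0.0.1 as in the commands above. `retry_until` is a hypothetical helper name, not a Docker or Elastic command.

```shell
# retry_until ATTEMPTS DELAY COMMAND [ARGS...]
# Runs COMMAND until it succeeds or ATTEMPTS runs have failed.
# Returns 0 on the first success and 1 if every attempt fails.
retry_until() {
  attempts=$1
  delay=$2
  shift 2
  tries=0
  while [ "$tries" -lt "$attempts" ]; do
    if "$@"; then
      return 0
    fi
    tries=$((tries + 1))
    # Pause between attempts, but not after the final failure.
    if [ "$tries" -lt "$attempts" ]; then
      sleep "$delay"
    fi
  done
  return 1
}
```

For example, `retry_until 30 2 curl -fsS http://127.0.0.1:9200/_cluster/health` polls the Elasticsearch cluster health API every two seconds, up to 30 times, and `retry_until 30 2 curl -fsS http://127.0.0.1:5601/api/status` does the same for the Kibana status API.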

Create a Kubernetes manifest file, elasticsearch.yaml, for Elasticsearch:

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  namespace: ingress-apisix
  name: elasticsearch-data
spec:
  accessModes:
    - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi
---
apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: ingress-apisix
  name: elasticsearch
spec:
  replicas: 1
  selector:
    matchLabels:
      app: elasticsearch
  template:
    metadata:
      namespace: ingress-apisix
      labels:
        app: elasticsearch
    spec:
      containers:
        - name: elasticsearch
          image: docker.elastic.co/elasticsearch/elasticsearch:7.17.1
          ports:
            - containerPort: 9200
            - containerPort: 9300
          env:
            - name: ES_JAVA_OPTS
              value: "-Xms512m -Xmx512m"
            - name: discovery.type
              value: "single-node"
            - name: xpack.security.enabled
              value: "false"
          volumeMounts:
            - name: elasticsearch-storage
              mountPath: /usr/share/elasticsearch/data
      volumes:
        - name: elasticsearch-storage
          persistentVolumeClaim:
            claimName: elasticsearch-data
---
apiVersion: v1
kind: Service
metadata:
  namespace: ingress-apisix
  name: elasticsearch
spec:
  selector:
    app: elasticsearch
  ports:
    - name: http
      port: 9200
      targetPort: 9200
    - name: transport
      port: 9300
      targetPort: 9300
  type: ClusterIP

Create another Kubernetes manifest file, kibana.yaml, for Kibana:

apiVersion: apps/v1
kind: Deployment
metadata:
  namespace: ingress-apisix
  name: kibana
spec:
  replicas: 1
  selector:
    matchLabels:
      app: kibana
  template:
    metadata:
      namespace: ingress-apisix
      labels:
        app: kibana
    spec:
      containers:
        - name: kibana
          image: docker.elastic.co/kibana/kibana:7.17.1
          ports:
            - containerPort: 5601
          env:
            - name: ELASTICSEARCH_HOSTS
              value: "http://elasticsearch:9200"
---
apiVersion: v1
kind: Service
metadata:
  namespace: ingress-apisix
  name: kibana
spec:
  selector:
    app: kibana
  ports:
    - name: http
      port: 5601
      targetPort: 5601
  type: ClusterIP

Apply the configurations to your cluster:

kubectl apply -f elasticsearch.yaml -f kibana.yaml

Forward the Kibana service port to a port on your local machine:

kubectl port-forward -n ingress-apisix svc/kibana 5601:5601 &

If successful, you should see the Kibana web dashboard at localhost:5601.

Enable the elasticsearch-logger Plugin

Enable elasticsearch-logger globally and create a sample route to generate logs. Alternatively, you can enable the plugin on a single route.

- Admin API

- ADC

- Ingress Controller

Using the Admin API, enable the elasticsearch-logger plugin on all routes through a global rule:

curl "http://127.0.0.1:9180/apisix/admin/global_rules/" -X PUT -d '
{
  "id": "elasticsearch",
  "plugins": {
    "elasticsearch-logger": {
      "endpoint_addrs": ["http://elasticsearch:9200"],
      "field": {
        "index": "gateway",
        "type": "logs"
      },
      "ssl_verify": false,
      "timeout": 60,
      "retry_delay": 1,
      "buffer_duration": 60,
      "max_retry_count": 0,
      "batch_max_size": 5,
      "inactive_timeout": 5
    }
  }
}'
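The batching fields in this rule control when buffered log entries are shipped to Elasticsearch. As a rough worked example, assuming the usual APISIX batch processor behavior (a batch is sent as soon as batch_max_size entries accumulate, and a partial batch is flushed once inactive_timeout seconds pass without new entries or its oldest entry reaches buffer_duration), the arithmetic for a burst of requests can be sketched as follows. The function names are hypothetical and exist only for illustration.

```shell
# full_batches REQUESTS BATCH_MAX_SIZE
# Number of batches sent immediately because they reached batch_max_size.
full_batches() {
  echo $(( $1 / $2 ))
}

# leftover_entries REQUESTS BATCH_MAX_SIZE
# Entries that stay buffered until one of the timers flushes them.
leftover_entries() {
  echo $(( $1 % $2 ))
}
```

With batch_max_size set to 5, `full_batches 12 5` gives 2 and `leftover_entries 12 5` gives 2, so two batches go out right away and the last two entries wait for the timers. With the values above, that is roughly 5 seconds of inactivity.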

Create a sample route to collect logs from:

curl -i "http://127.0.0.1:9180/apisix/admin/routes" -X PUT -d '
{
  "id": "getting-started-ip",
  "uri": "/ip",
  "upstream": {
    "nodes": {
      "httpbin.org:80": 1
    },
    "type": "roundrobin"
  }
}'

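With ADC, create a configuration file, adc-elasticsearch.yaml, that enables the elasticsearch-logger plugin globally and defines a sample route to collect logs from: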

global_rules:
  elasticsearch-logger:
    endpoint_addr: "http://elasticsearch:9200"
    field:
      index: "gateway"
      type: "logs"
    ssl_verify: false
    timeout: 60
    retry_delay: 1
    buffer_duration: 60
    max_retry_count: 0
    batch_max_size: 5
    inactive_timeout: 5
services:
  - name: httpbin Service
    routes:
      - uris:
          - /ip
        name: getting-started-ip
    upstream:
      type: roundrobin
      nodes:
        - host: httpbin.org
          port: 80
          weight: 1

Synchronize the configuration to APISIX:

adc sync -f adc-elasticsearch.yaml

- Gateway API

- APISIX CRD

With the Gateway API, create a Kubernetes manifest file, global-elasticsearch.yaml, to enable elasticsearch-logger globally:

apiVersion: apisix.apache.org/v1alpha1
kind: GatewayProxy
metadata:
  namespace: ingress-apisix
  name: apisix-config
spec:
  plugins:
    - name: elasticsearch-logger
      enabled: true
      config:
        endpoint_addr: "http://elasticsearch:9200"
        field:
          index: "gateway"
          type: "logs"
        ssl_verify: false
        timeout: 60
        retry_delay: 1
        buffer_duration: 60
        max_retry_count: 0
        batch_max_size: 5
        inactive_timeout: 5

Create another Kubernetes manifest file, httpbin-route.yaml, for the sample route to collect logs from:

apiVersion: v1
kind: Service
metadata:
  namespace: ingress-apisix
  name: httpbin-external-domain
spec:
  type: ExternalName
  externalName: httpbin.org
---
apiVersion: gateway.networking.k8s.io/v1
kind: HTTPRoute
metadata:
  namespace: ingress-apisix
  name: getting-started-ip
spec:
  parentRefs:
    - name: apisix
  rules:
    - matches:
        - path:
            type: Exact
            value: /ip
      backendRefs:
        - name: httpbin-external-domain
          port: 80

With APISIX CRDs, create a Kubernetes manifest file, global-elasticsearch.yaml, to enable elasticsearch-logger globally:

apiVersion: apisix.apache.org/v2
kind: ApisixGlobalRule
metadata:
  namespace: ingress-apisix
  name: global-elasticsearch
spec:
  ingressClassName: apisix
  plugins:
    - name: elasticsearch-logger
      enable: true
      config:
        endpoint_addr: "http://elasticsearch:9200"
        field:
          index: "gateway"
          type: "logs"
        ssl_verify: false
        timeout: 60
        retry_delay: 1
        buffer_duration: 60
        max_retry_count: 0
        batch_max_size: 5
        inactive_timeout: 5

Create another Kubernetes manifest file, httpbin-route.yaml, for the sample route to collect logs from:

apiVersion: apisix.apache.org/v2
kind: ApisixUpstream
metadata:
  namespace: ingress-apisix
  name: httpbin-external-domain
spec:
  ingressClassName: apisix
  externalNodes:
    - type: Domain
      name: httpbin.org
---
apiVersion: apisix.apache.org/v2
kind: ApisixRoute
metadata:
  namespace: ingress-apisix
  name: getting-started-ip
spec:
  ingressClassName: apisix
  http:
    - name: getting-started-ip
      match:
        paths:
          - /ip
      upstreams:
        - name: httpbin-external-domain

Apply the configurations to your cluster:

kubectl apply -f global-elasticsearch.yaml -f httpbin-route.yaml

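Customize the Log Format

As an optional step, you can customize the log format of elasticsearch-logger. For most APISIX logging plugins, the log format can be customized locally on the plugin (for example, when it is bound to a route) or globally with plugin metadata.

Use built-in variables to add the host address, timestamp, and client IP address to the log: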

- Admin API

- ADC

- Ingress Controller

curl "http://127.0.0.1:9180/apisix/admin/plugin_metadata/elasticsearch-logger" -X PUT -d '
{
  "log_format": {
    "host": "$host",
    "timestamp": "$time_iso8601",
    "client_ip": "$remote_addr"
  }
}'

plugin_metadata:
  elasticsearch-logger:
    log_format:
      host: $host
      client_ip: $remote_addr
      timestamp: $time_iso8601

Synchronize the configuration to APISIX:

adc sync -f adc-plugin-metadata.yaml -f adc-elasticsearch.yaml

- Gateway API

- APISIX CRD

Update your GatewayProxy manifest to include the plugin metadata configuration for elasticsearch-logger:

apiVersion: apisix.apache.org/v1alpha1
kind: GatewayProxy
metadata:
  namespace: ingress-apisix
  name: apisix-config
spec:
  provider:
    type: ControlPlane
    controlPlane:
      # your control plane connection configuration
      # ....
  pluginMetadata:
    elasticsearch-logger: {
        "log_format": {
          "host": "$host",
          "timestamp": "$time_iso8601",
          "client_ip": "$remote_addr"
        }
      }

Apply the configuration to your cluster:

kubectl apply -f elasticsearch-plugin-metadata.yaml
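With this metadata in place, each log entry carries the three configured keys, with the variables resolved per request. The snippet below is only an illustration of the resulting shape, not APISIX's implementation: `render_log_entry` is a hypothetical helper, the sample values are made up, and APISIX may attach additional fields such as the route ID.

```shell
# render_log_entry HOST TIMESTAMP CLIENT_IP
# Prints a JSON object with the same keys as the log_format above,
# with the variables replaced by the given concrete values.
render_log_entry() {
  printf '{"host":"%s","timestamp":"%s","client_ip":"%s"}\n' "$1" "$2" "$3"
}
```

For instance, `render_log_entry 127.0.0.1 2024-01-01T00:00:00+00:00 172.18.0.1` prints a single JSON object with the host, timestamp, and client_ip keys filled in.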

Configure Kibana

Send a few requests to the route to generate access log entries:

for i in {1..10}; do
  curl -i "http://127.0.0.1:9080/ip"
done
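Before switching to Kibana, it can be useful to confirm that the entries reached Elasticsearch. The sketch below assumes Elasticsearch is reachable on 127.0.0.1:9200 (as in the Docker setup; on Kubernetes you would need to port-forward the elasticsearch service first) and that the index is named gateway, as configured in field.index. Both function names are hypothetical; the `_count` endpoint is Elasticsearch's count API.

```shell
# count_url BASE_URL INDEX
# Builds the URL of the Elasticsearch count API for an index.
count_url() {
  printf '%s/%s/_count\n' "$1" "$2"
}

# extract_count JSON
# Pulls the numeric "count" field out of a _count response body.
extract_count() {
  printf '%s\n' "$1" |
    sed -n 's/.*"count"[[:space:]]*:[[:space:]]*\([0-9][0-9]*\).*/\1/p'
}
```

Running `extract_count "$(curl -s "$(count_url http://127.0.0.1:9200 gateway)")"` should print the number of indexed log entries. Because entries are sent in batches, the number may lag behind the requests by a few seconds.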

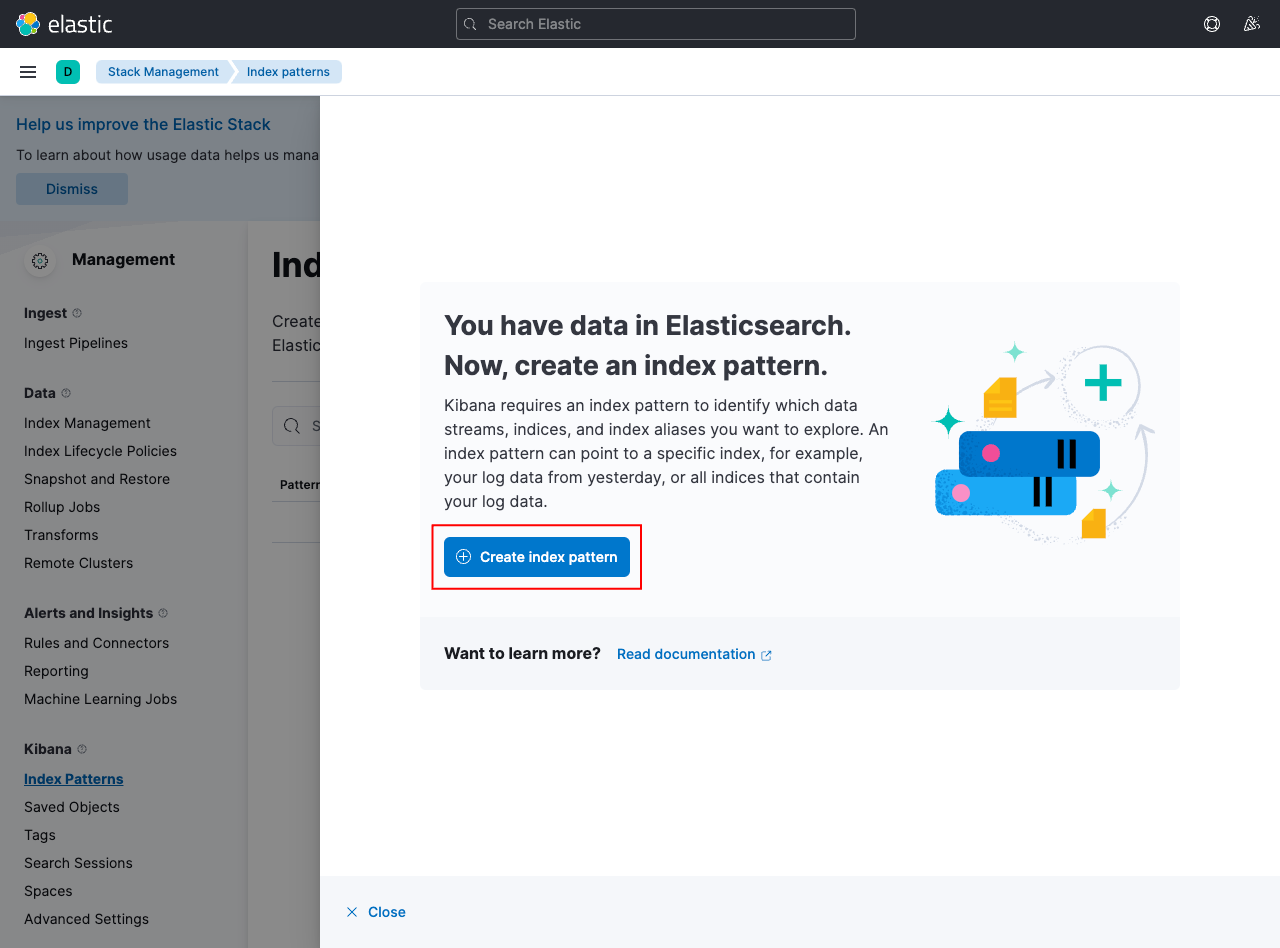

在 localhost:5601 上打开 Kibana 仪表板,然后从菜单中单击 Discover 选项卡。创建一个新的索引模式以从 Elasticsearch 获取数据:

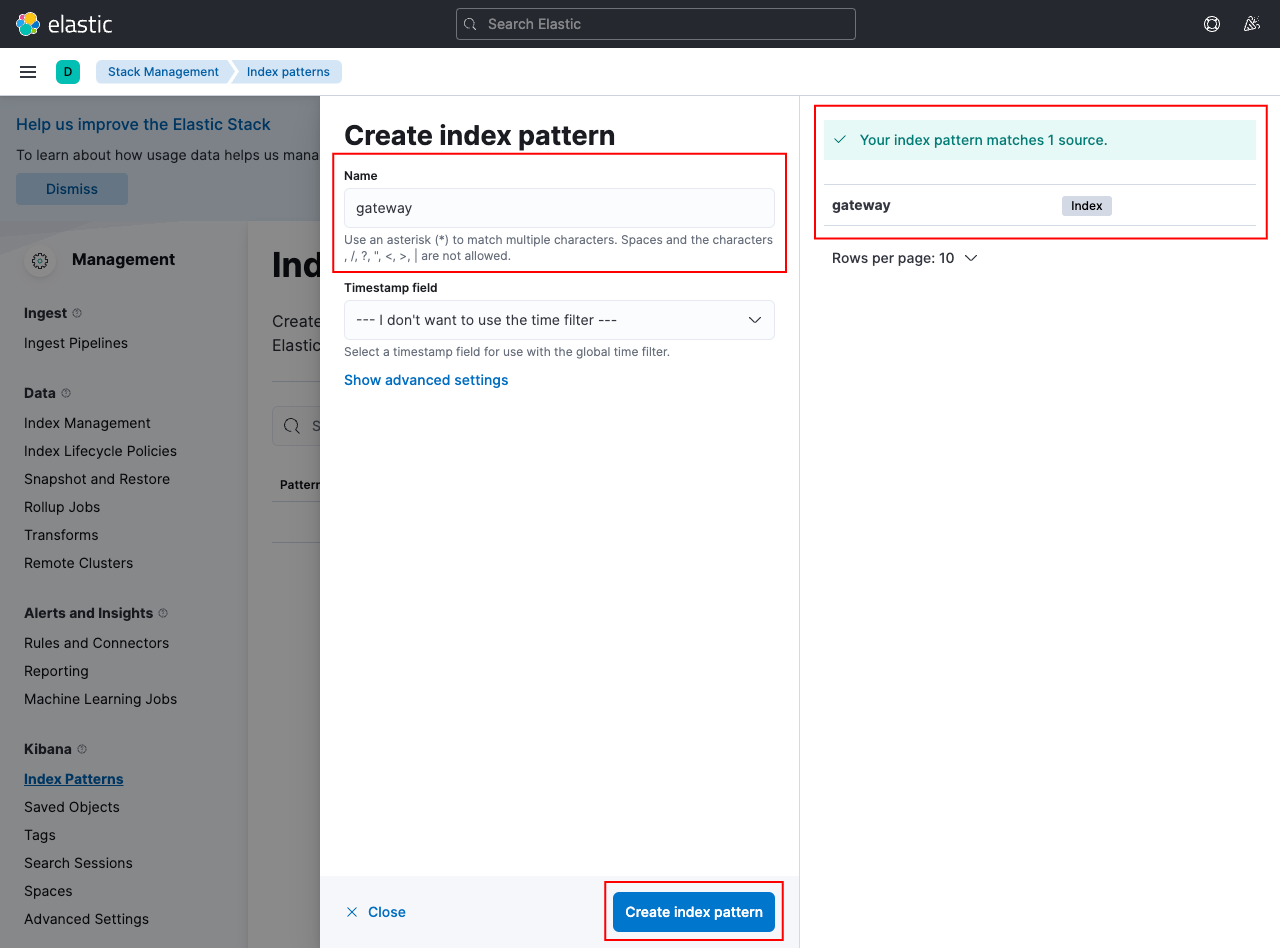

创建一个模式 gateway 以匹配 Elasticsearch 中的索引数据:

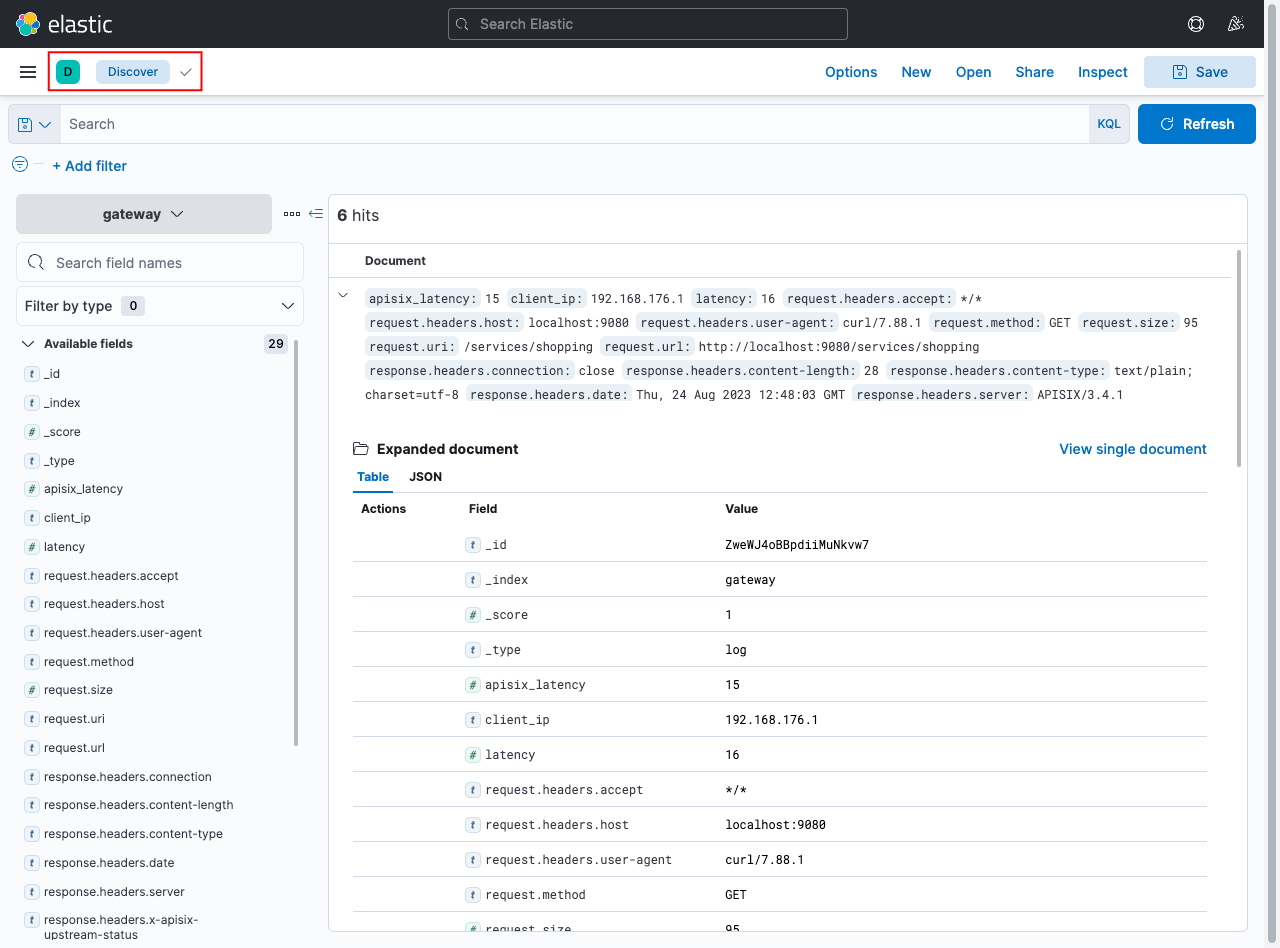

如果你的配置正确,你可以返回 Discover 选项卡并查看来自 APISIX 的日志:

Next Steps

See the elasticsearch-logger plugin reference to learn more about the plugin's configuration options.